Iris: Building an Experimentation Platform From 0 to 1

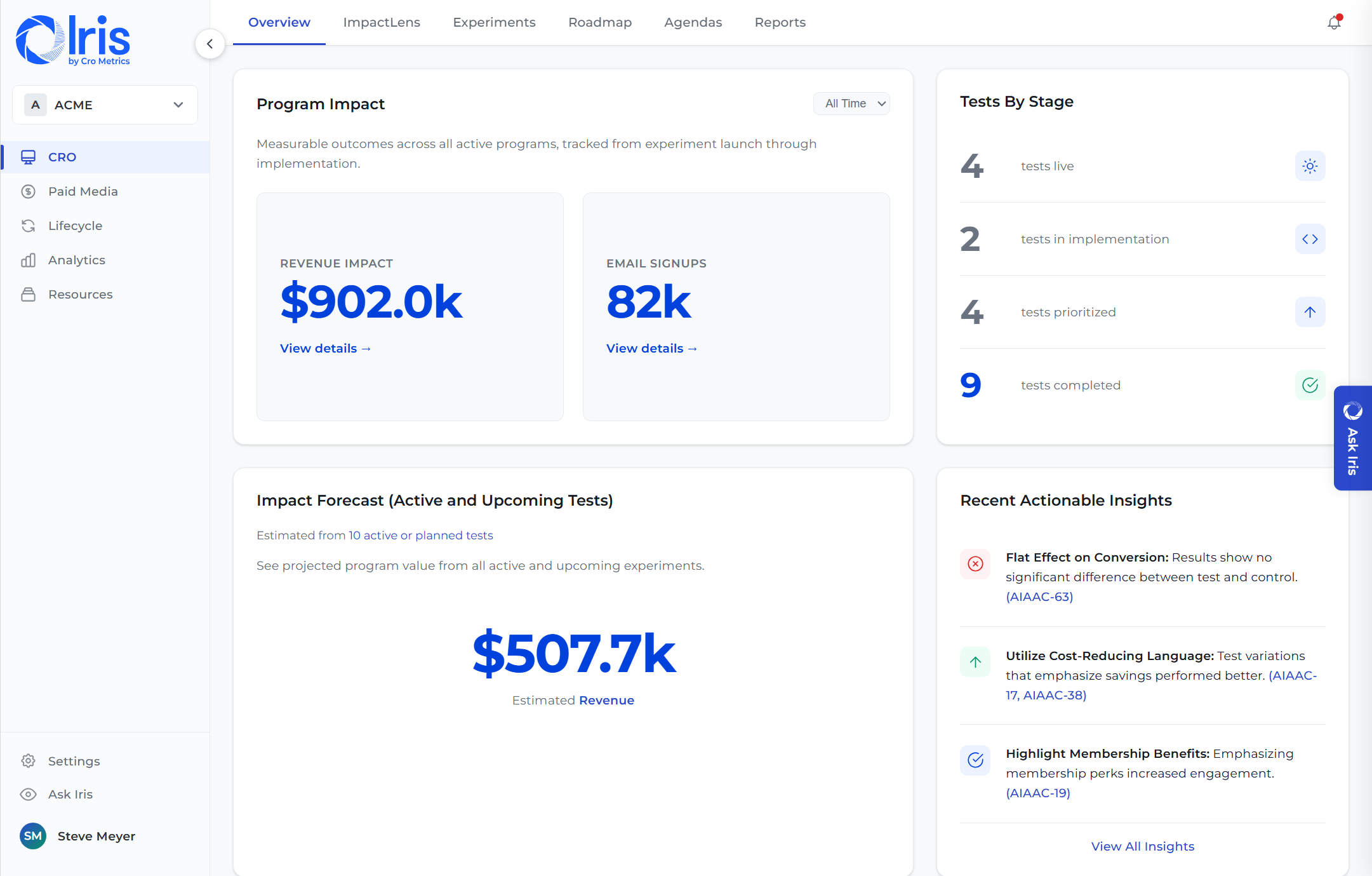

I conceived, designed, and led the development of Iris — a SaaS platform that manages the full experimentation lifecycle for 300+ clients and 12,000+ experiments. Here's the problem it solved, how I built it, and what it delivered.

The Experimentation Lifecycle

Experimentation at scale is a workflow problem. Iris solves it end-to-end.

The Problem

Experimentation programs don't fail because of bad ideas. They fail because of bad infrastructure.

Cro Metrics runs experimentation programs for enterprise clients — companies like Zillow, Atlassian, Calendly, and Bombas. At any given time, we were managing hundreds of experiments across dozens of clients, each with its own hypotheses, specs, build requirements, QA cycles, and analysis reports.

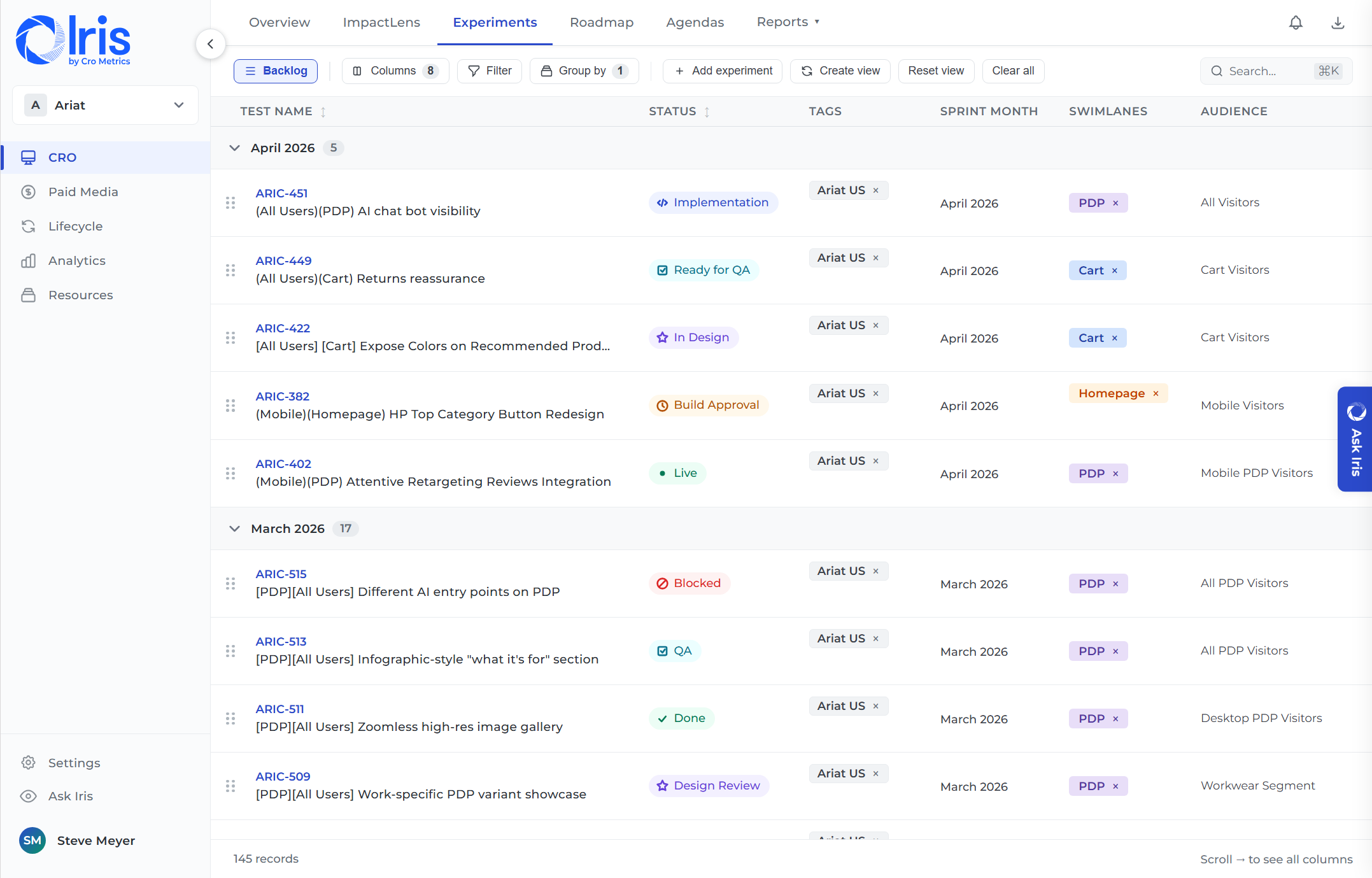

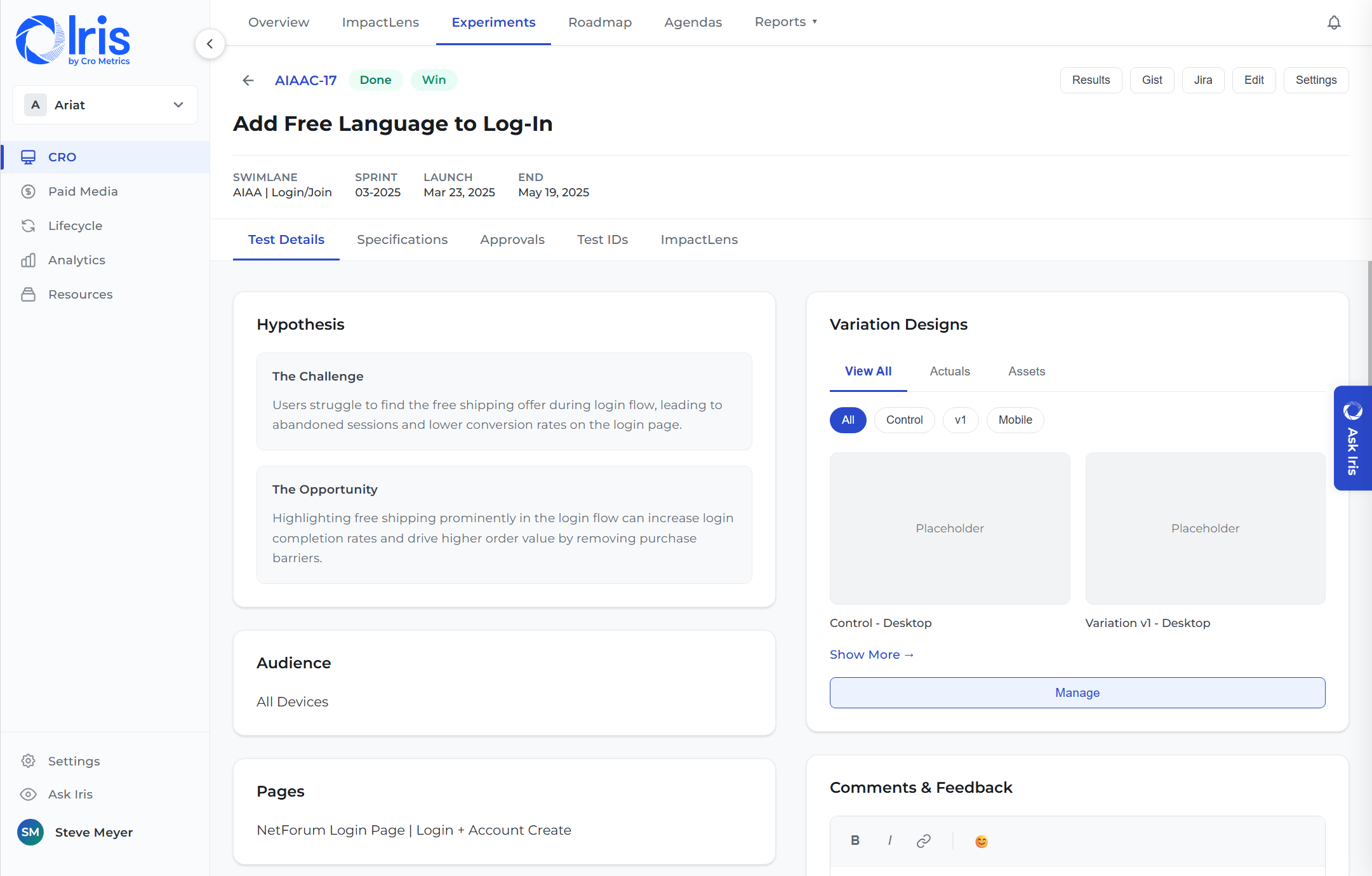

Before Iris, experiment data was scattered across Airtable, Jira, Google Sheets, and tribal knowledge. Program managers (PGMs) and growth strategists both felt the pain. PGMs spent hours on status tracking and reporting instead of improving operations, while strategists couldn't find past learnings or move experiments efficiently between stages. When a new strategist joined a client account, there was no way to surface what had already been tested and learned.

The problem wasn't a lack of talent or testing ideas. It was that experimentation at this scale demands purpose-built tooling — and nothing on the market was designed for how agency-led experimentation programs actually work.

What I Built

A system of record for the entire experimentation lifecycle.

Iris is a web application that manages experiments from ideation through analysis and reporting. It's the single source of truth for every experiment — its hypothesis, specification, status, results, and learnings — across every client and program.

Core capabilities I defined and shipped:

My Role

Conceived it. Designed it. Led it from zero to production.

Iris started as a problem I kept running into while leading enterprise experimentation programs. I saw the same operational friction across every client — status tracking in spreadsheets, specs in docs, results buried in email threads. I pitched the concept to leadership, defined the product vision and initial feature set, and led it from first prototype to a production platform serving the entire client base.

As Director of Product, I own the roadmap, define priorities, and lead a 3-person product engineering team. I collaborate across design, management, client services, engineering/QA, and marketing/sales to align product decisions with business objectives. I also established the monetization model that turned Iris from an internal tool into a revenue-generating SaaS product.

How I Thought About It

Key product decisions that shaped the platform.

What Came Next

Iris became the foundation for AI-powered experimentation.

Once Iris established a structured data layer across 12,000+ experiments, it unlocked something bigger: the ability to build AI products on top of real experimentation data. That foundation led directly to two AI capabilities I built and shipped:

Building an experimentation platform?

I've done it from zero — product strategy, architecture decisions, team leadership, and scaling to hundreds of clients. Let's talk about what you're building.