Ask Iris: An AI Agent That Does the Experimentation Work

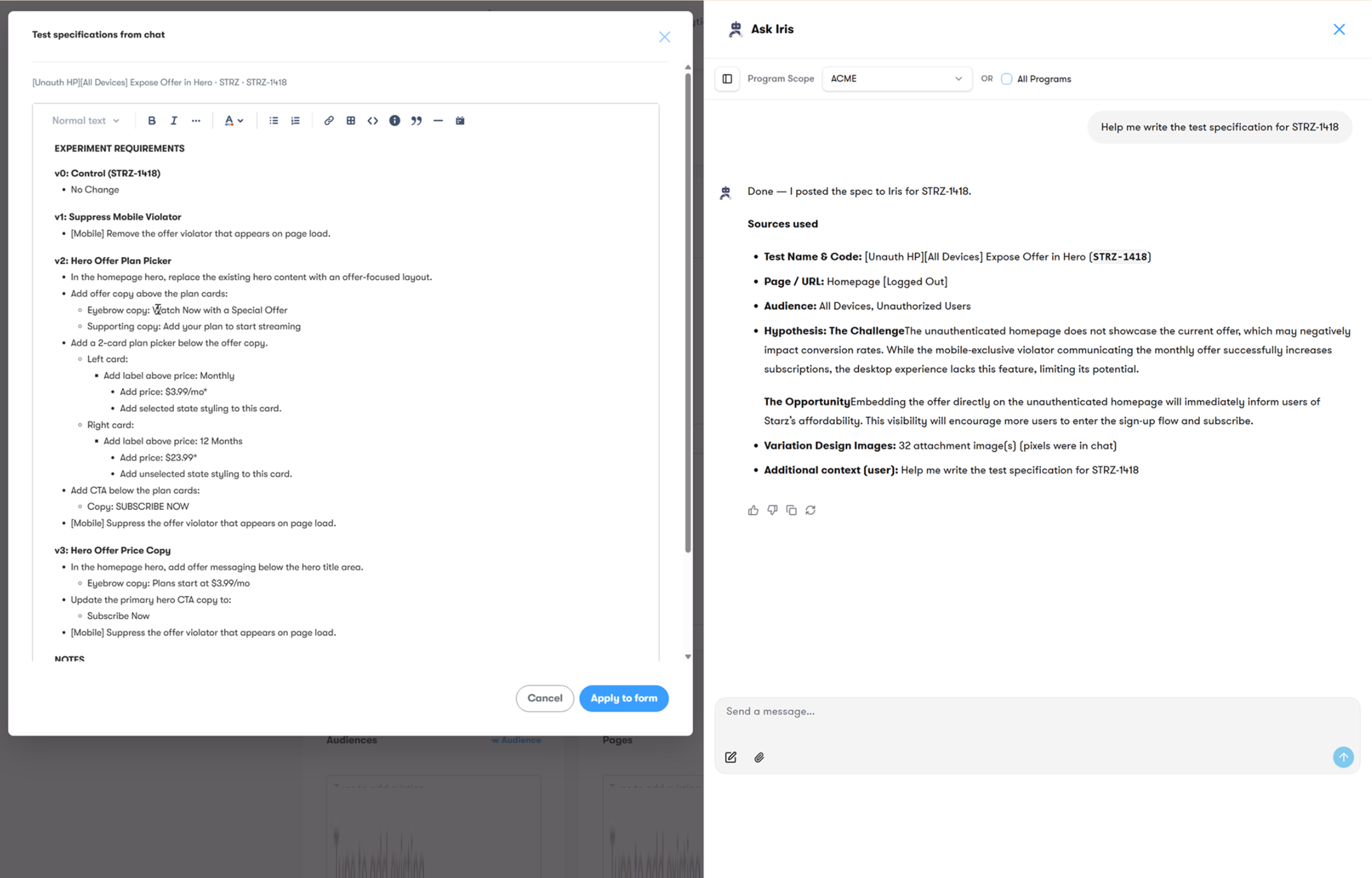

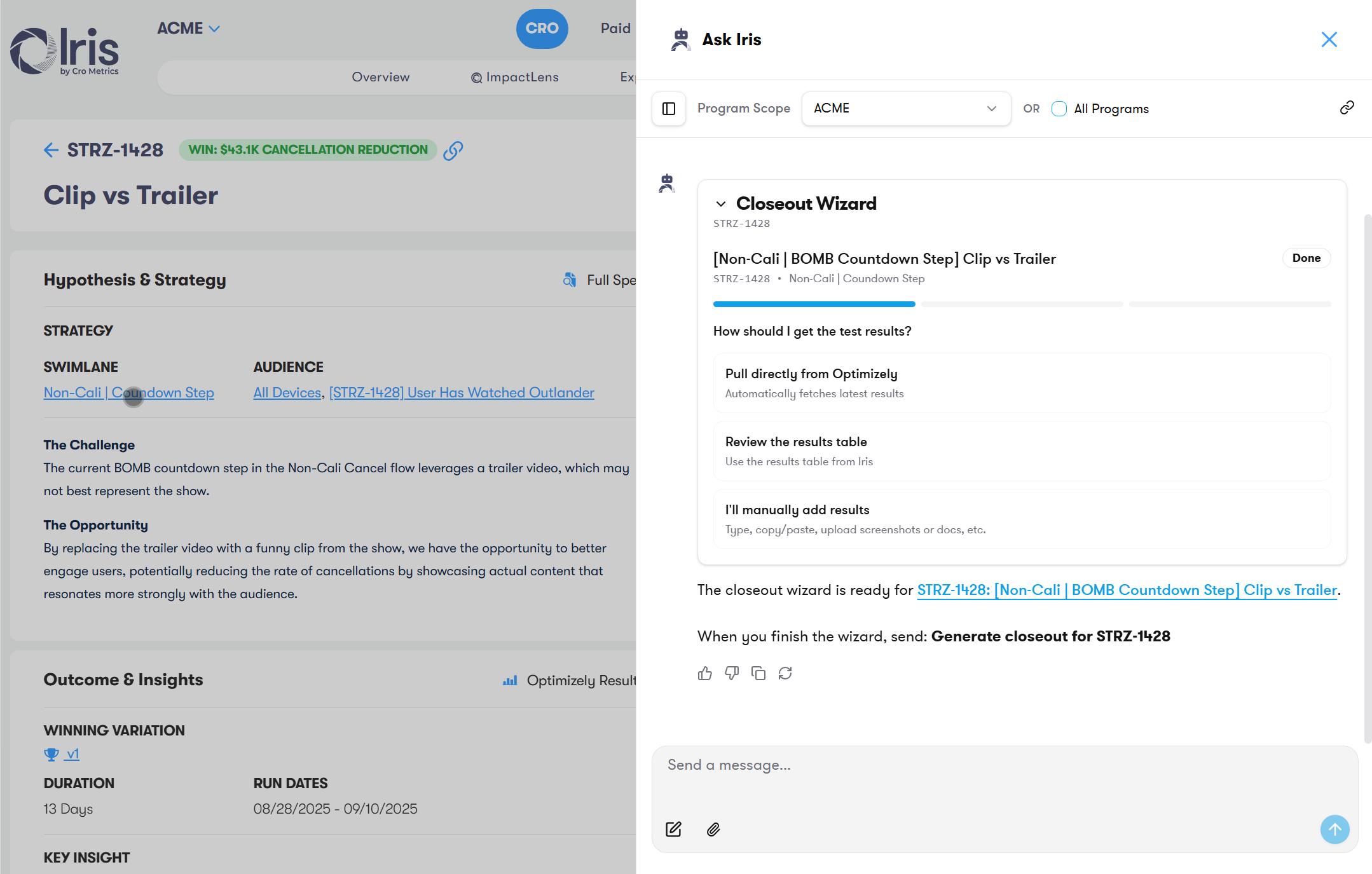

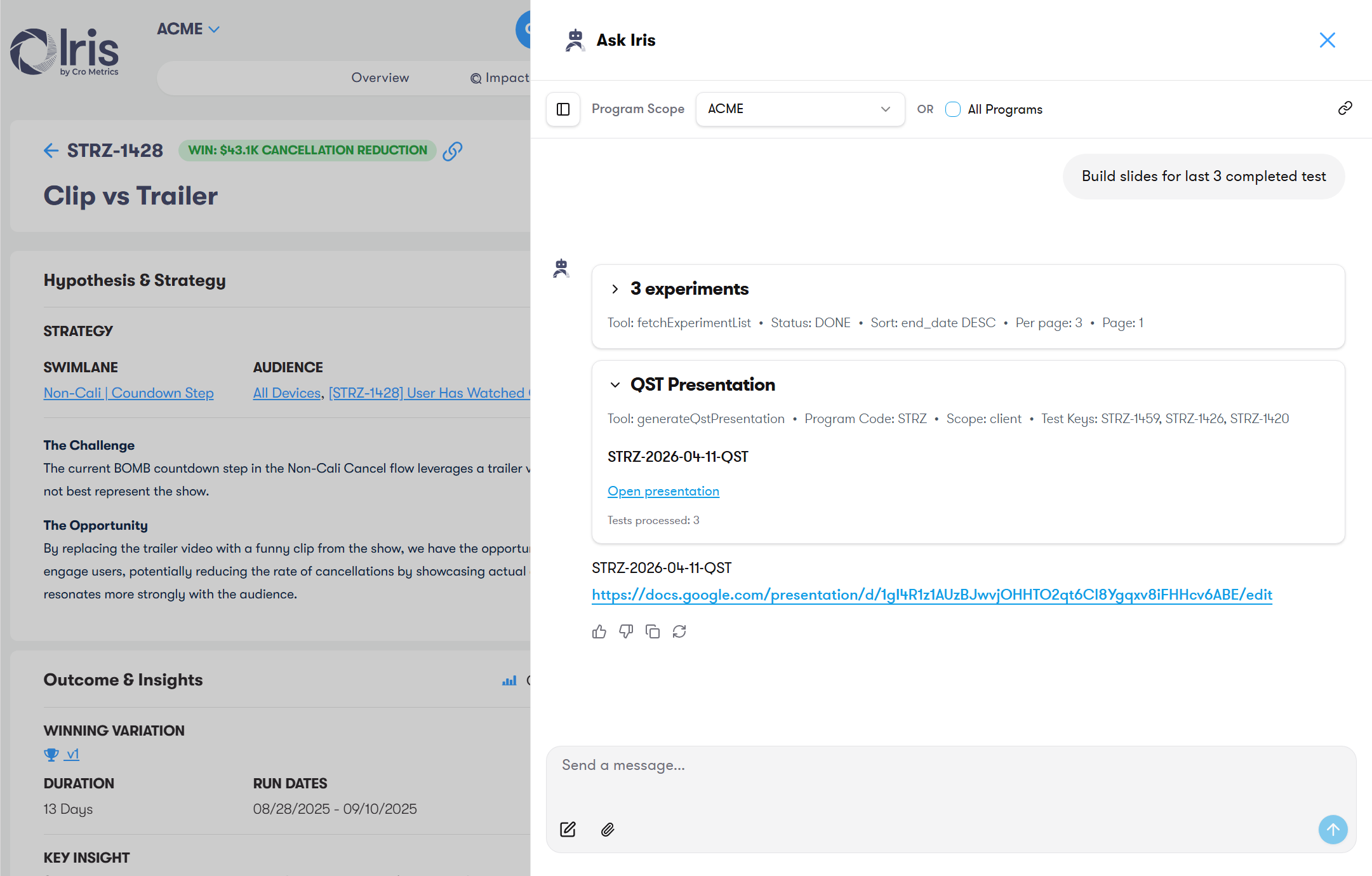

I designed and built Ask Iris, an agentic AI assistant embedded in the Iris platform. It doesn't just answer questions about experiments; it runs workflows: writing specs, analyzing results, generating test ideas, and surfacing insights across 12,000+ experiments.

The Problem

How can we unlock 12,000 experiments' worth of insights to improve CRO program performance?

Iris solved the operational problem — the testing workflow was streamlined and knowledge was accessible at a smaller scale. But when a program has over 1,000 past tests, and you want insights across programs and industries, using all of that data efficiently becomes a real challenge.

Strategists spent significant time digging through past experiments to avoid re-testing and to build novel strategies — manually reconstructing context that already existed somewhere in the system.

There was a second problem: repetitive tasks like spec writing and results analysis consumed hours per experiment, and quality varied depending on who did the work. Ask Iris didn't just make these faster — it standardized and improved the output.

What I Built

An AI assistant that acts, not just answers.

Ask Iris is an AI assistant embedded directly in the Iris platform. Users interact with it through natural language, but under the hood it's an agentic system with 17 purpose-built tools that can read, write, search, and take action across the entire experiment database.

The key distinction: Ask Iris doesn't just answer questions; it does work. It writes specs, analyzes closeout results, generates test ideas and prioritizes them using ImpactLens, captures screenshots for UX analysis, and searches a vector knowledge base. It's the layer that turns a large body of experiment data into accessible, actionable insights.
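To make "acts, not just answers" concrete, here is a minimal sketch of a tool-dispatch layer of the kind described above. It is an illustration under stated assumptions, not the production code: the tool names (`search_experiments`, `draft_spec`), the argument shapes, and the in-memory data are all hypothetical, and real tools would be asynchronous and backed by the experiment database.

```typescript
// Hypothetical sketch: tool names, argument shapes, and data are illustrative
// assumptions, not the actual Ask Iris tool schemas.
type ToolArgs = Record<string, unknown>;

interface Tool {
  name: string;
  description: string; // What the model reads when deciding which tool to call.
  run: (args: ToolArgs) => string; // Plain-text result handed back to the model.
}

interface ToolCall {
  name: string;
  args: ToolArgs;
}

// In-memory stand-in for the experiment database.
const sampleExperiments = [
  { id: "exp-1", title: "Sticky add-to-cart button" },
  { id: "exp-2", title: "Shorter checkout form" },
];

const toolList: Tool[] = [
  {
    name: "search_experiments",
    description: "Find past experiments whose title contains a keyword.",
    run: (args) => {
      const keyword = String(args.keyword || "").toLowerCase();
      const hits = sampleExperiments.filter(
        (e) => e.title.toLowerCase().indexOf(keyword) !== -1
      );
      return JSON.stringify(hits.map((e) => e.id));
    },
  },
  {
    name: "draft_spec",
    description: "Produce a skeleton spec for a proposed test.",
    run: (args) => "Spec draft for: " + String(args.idea || "untitled"),
  },
];

// Index tools by name so a model-issued call can be routed in one lookup.
const toolRegistry: Record<string, Tool> = {};
for (const tool of toolList) {
  toolRegistry[tool.name] = tool;
}

// Route a tool call requested by the model to its handler.
// Unknown names return an error string instead of throwing, so the model
// can see the failure and try a different tool.
function dispatch(call: ToolCall): string {
  if (!Object.prototype.hasOwnProperty.call(toolRegistry, call.name)) {
    return "Unknown tool: " + call.name;
  }
  return toolRegistry[call.name].run(call.args);
}
```

In an agent loop, the model's requested call would be passed to `dispatch` and the returned string appended to the conversation before the next model turn. Keeping each tool small and single-purpose is what lets the same loop both read (search) and write (draft a spec).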

17 tools across four capability areas:

Under the Hood

Production AI, built with intention.

Ask Iris runs as a Next.js application embedded in the Iris platform via iframe, with its own authentication layer, streaming infrastructure, and state management. I made deliberate architecture decisions to ensure it could operate reliably at scale across 300+ multi-tenant client environments.
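The text above doesn't spell out how tenant isolation is enforced, so the following is only one common pattern, shown as an assumption: every data read is routed through a function that takes the authenticated session and applies the tenant filter itself. `Session`, `ExperimentRow`, and `scopedExperiments` are hypothetical names.

```typescript
// Hypothetical sketch of tenant scoping. These types and names are
// illustrative assumptions, not the Iris data model or API.
interface Session {
  userId: string;
  tenantId: string; // Resolved by the authentication layer, never by the client.
}

interface ExperimentRow {
  id: string;
  tenantId: string;
  title: string;
}

// Return only the rows that belong to the session's tenant.
// Tools call this instead of touching the raw collection, so no tool
// can forget to apply the filter.
function scopedExperiments(
  session: Session,
  allRows: ExperimentRow[]
): ExperimentRow[] {
  return allRows.filter((row) => row.tenantId === session.tenantId);
}

// Example data for two tenants.
const allRows: ExperimentRow[] = [
  { id: "exp-1", tenantId: "client-a", title: "Sticky add-to-cart button" },
  { id: "exp-2", tenantId: "client-b", title: "Shorter checkout form" },
  { id: "exp-3", tenantId: "client-a", title: "Trust badges near CTA" },
];

const visibleToClientA = scopedExperiments(
  { userId: "u-1", tenantId: "client-a" },
  allRows
);
```

The design choice worth noting is that the tenant ID comes from the session rather than from anything the model or the user types, which keeps an agent's tool calls inside the current client's data.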

Key architecture decisions:

My Role

Product vision, system design, and hands-on building.

Ask Iris was my initiative from concept through production. I identified the opportunity — that Iris's structured experiment data was an untapped asset for AI — pitched the vision to leadership, defined the product scope, designed the agent architecture, wrote the system prompts, and led the engineering effort to ship it.

This isn't a product I managed from a distance. I wrote the prompt architecture, defined every tool schema, designed the multi-step workflows for spec writing and closeout analysis, and built the adoption strategy that drove usage across the team. I work directly in the codebase alongside engineering — prototyping with AI-assisted development tools, debugging tool execution chains, and iterating on prompt behavior based on LangSmith traces.

The Foundation

Ask Iris exists because Iris exists.

The most important product decision behind Ask Iris was made years earlier: structuring experiment data in Iris so it could be queried, analyzed, and acted on programmatically. Without that structured data layer — 12,000+ experiments with consistent schemas for hypotheses, specifications, results, and learnings — there's nothing for an AI agent to work with. Ask Iris is the payoff of building the right foundation first.
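A small sketch of why consistent schemas matter. The four top-level sections (hypothesis, specification, results, learnings) come from the description above; every field name inside them, and the `learningsFromWins` helper, are assumptions made for illustration.

```typescript
// Hypothetical record shape. Only the four section names are taken from the
// text; the inner fields are illustrative assumptions.
interface ExperimentRecord {
  id: string;
  hypothesis: string;
  specification: { page: string; variants: string[] };
  results: { status: "win" | "loss" | "inconclusive" };
  learnings: string[];
}

// Collect learnings from winning experiments on a given page type.
// Because every record has the same shape, this is a plain filter rather
// than a parsing exercise over free-form notes.
function learningsFromWins(
  records: ExperimentRecord[],
  page: string
): string[] {
  const collected: string[] = [];
  for (const record of records) {
    if (record.specification.page === page && record.results.status === "win") {
      for (const learning of record.learnings) {
        collected.push(learning);
      }
    }
  }
  return collected;
}

const sampleRecords: ExperimentRecord[] = [
  {
    id: "exp-1",
    hypothesis: "A sticky add-to-cart button reduces scroll friction.",
    specification: { page: "product", variants: ["control", "sticky"] },
    results: { status: "win" },
    learnings: ["Persistent CTAs help on long product pages."],
  },
  {
    id: "exp-2",
    hypothesis: "Fewer form fields increase checkout completion.",
    specification: { page: "checkout", variants: ["control", "short"] },
    results: { status: "loss" },
    learnings: ["Removing the phone field raised support contacts."],
  },
];

const productWins = learningsFromWins(sampleRecords, "product");
```

A function like this is the sort of thing an agent tool can call directly, which is only possible when the underlying records are structured rather than buried in documents.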

Building AI products on top of real data?

I've designed agent architectures, written production prompt systems, and shipped AI tools that do real work — not demos. Let's talk about what you're building.

Get in Touch